Passions Play:

Tech Notes from Benjamin Turner

-

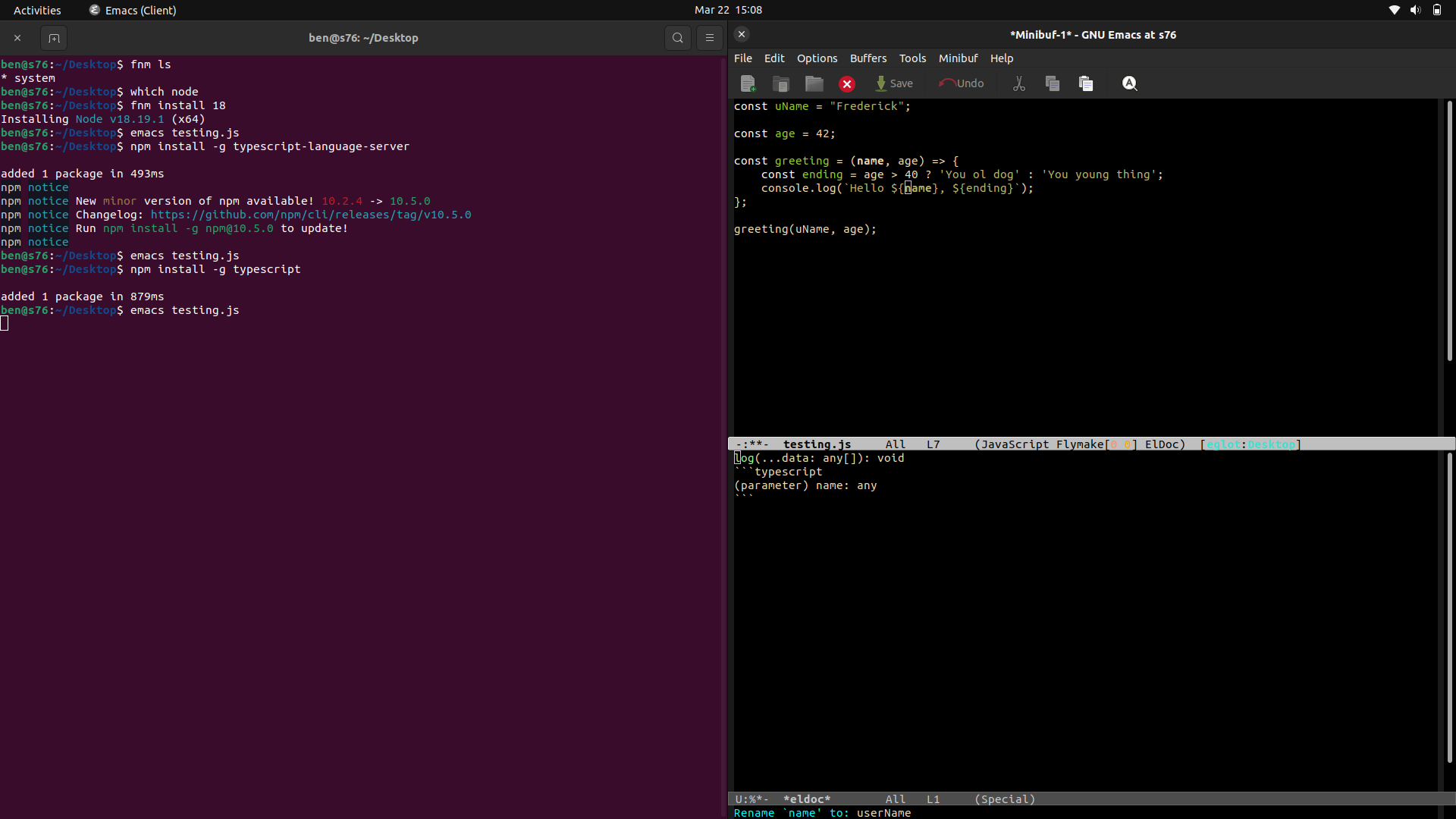

Quick TypeScript Sandbox in Emacs

An Emacs function for quickly creating a TypeScript sandbox configured with a custom compile script.

-

Compiling Emacs 29 on Ubuntu LTS 22.04

I’ve been on a kick to better understand my developer toolbox, and I recently decided to start by compiling Emacs from source on my trusty Ubuntu LTS 22.04. In the case of Emacs, my primary motivation was to explore the latest features bundled with version 29, such as tree-sitter and eglot. These two packages bundled…

-

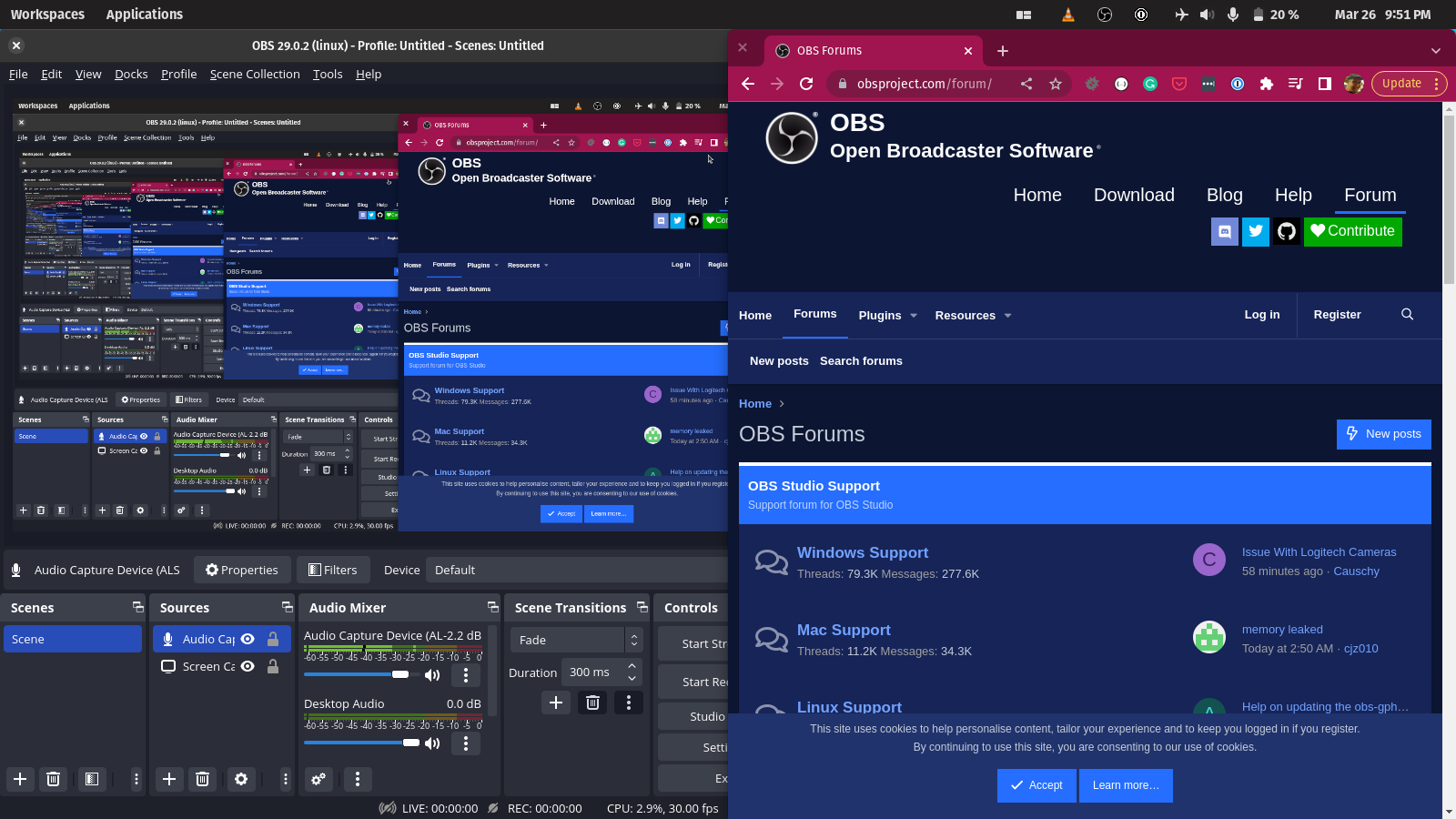

Installing OBS Studio on Linux

OBS Studio is popular open-source software for video recording and live streaming. It’s available for Windows, macOS, and Linux. This blog post focuses on installing OBS Studio on Linux. There are several ways to do so. One of the easiest methods is through the official OBS Studio PPA (Personal Package…

-

SVG recordings of Terminal Sessions

I recently stumbled on this cool technique from Wasim Lorgat for creating SVG documents of terminal sessions using asciinema and svg-term-cli:

-

QA FSE 15: Category Customization

I had some time to contribute to WordPress, and I’ve always loved how the Full Site Editing (FSE) outreach program lets anyone jump in and quickly start testing specific features and functionality. Today, I worked on QA’ing the latest one: FSE Program Testing Call #15: Category Customization. Questions: Did the experience crash at any point? No crashes!…

-

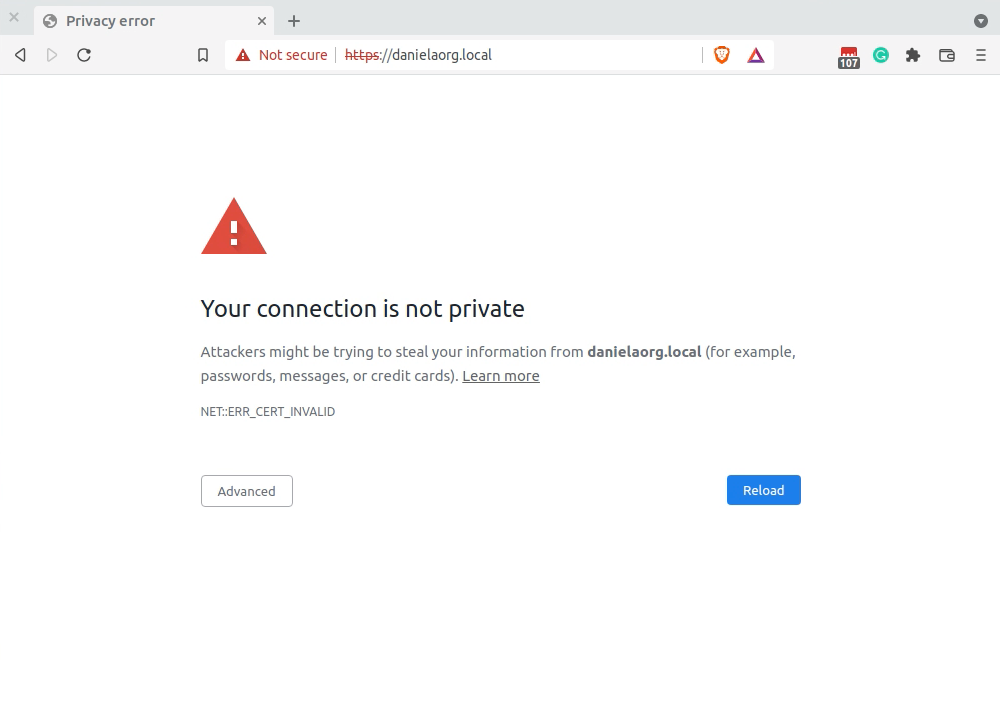

Fixing ERR_CERT_INVALID in Chrome

Resources and tools I used when researching an ERR_CERT_INVALID error within Google Chrome.

-

New Role: Software Engineer

I’m starting a new role as a Software Engineer, working with JavaScript to build Local.

-

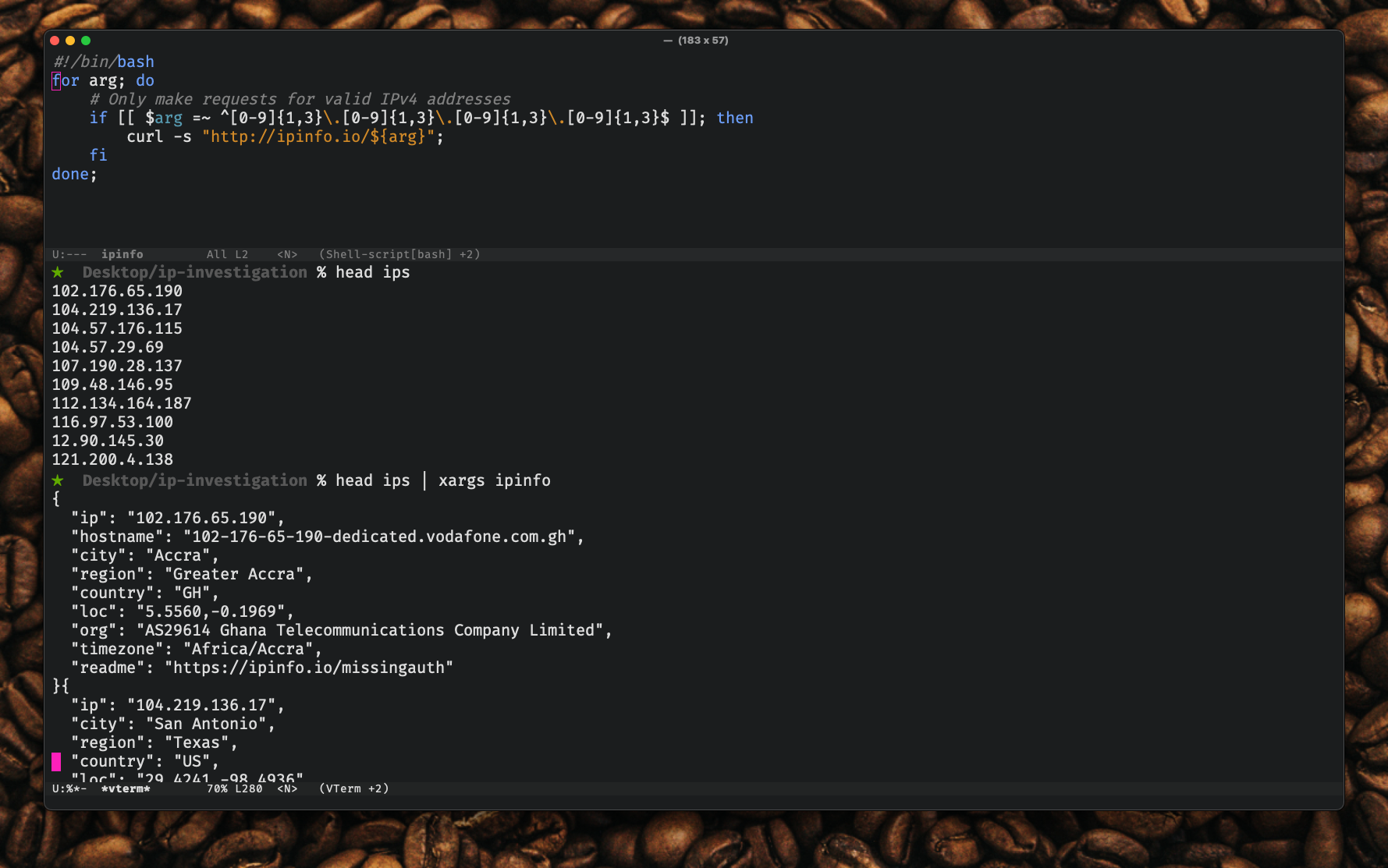

Scripted IP lookup in the terminal

Writing a Bash script to make it easier to do IP lookups from within the terminal.

-

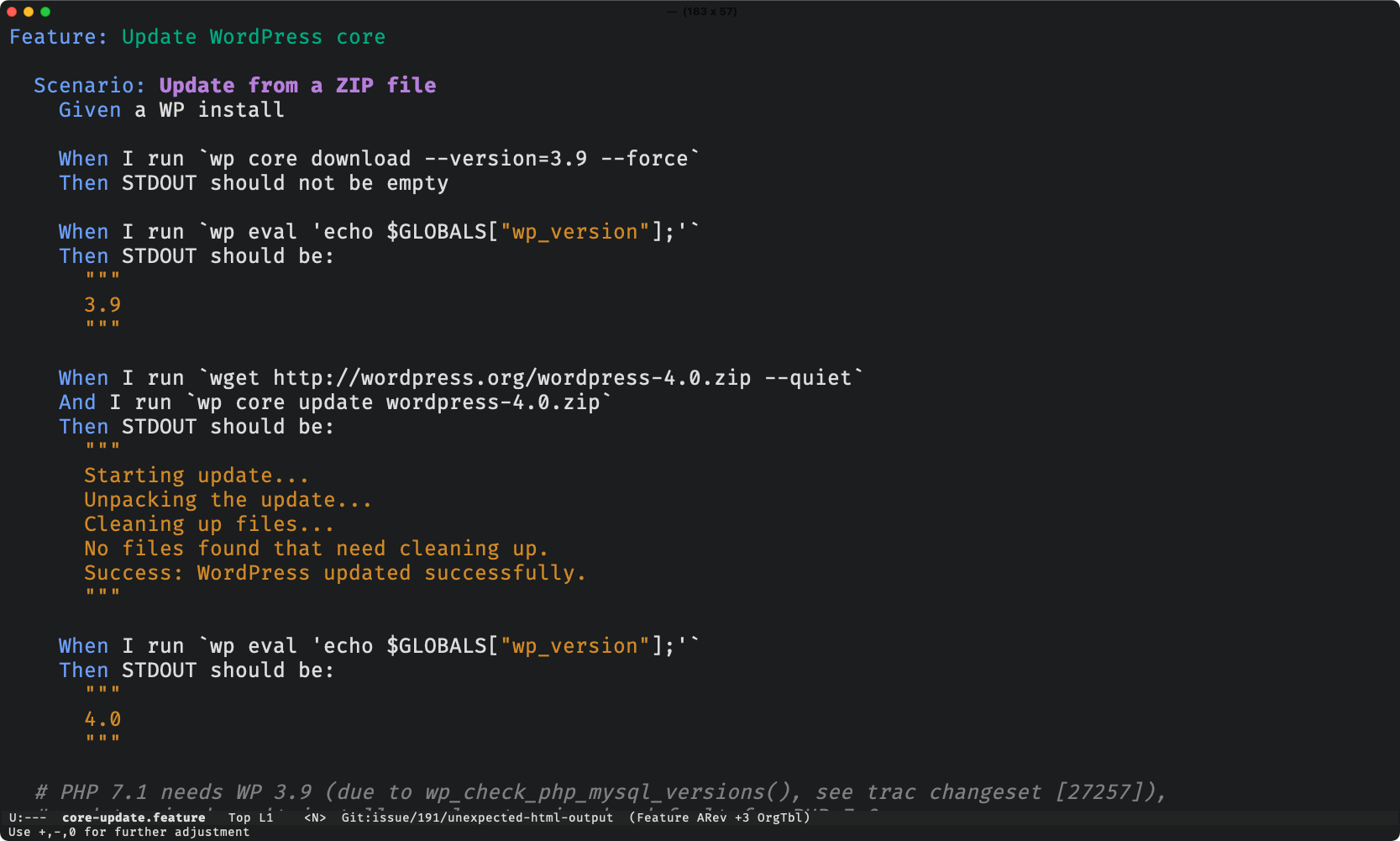

WP-CLI Focus Time – 2021-10-20

Another block of focus time spent helping the WP-CLI project, this time learning more about Behat and functional testing.

-

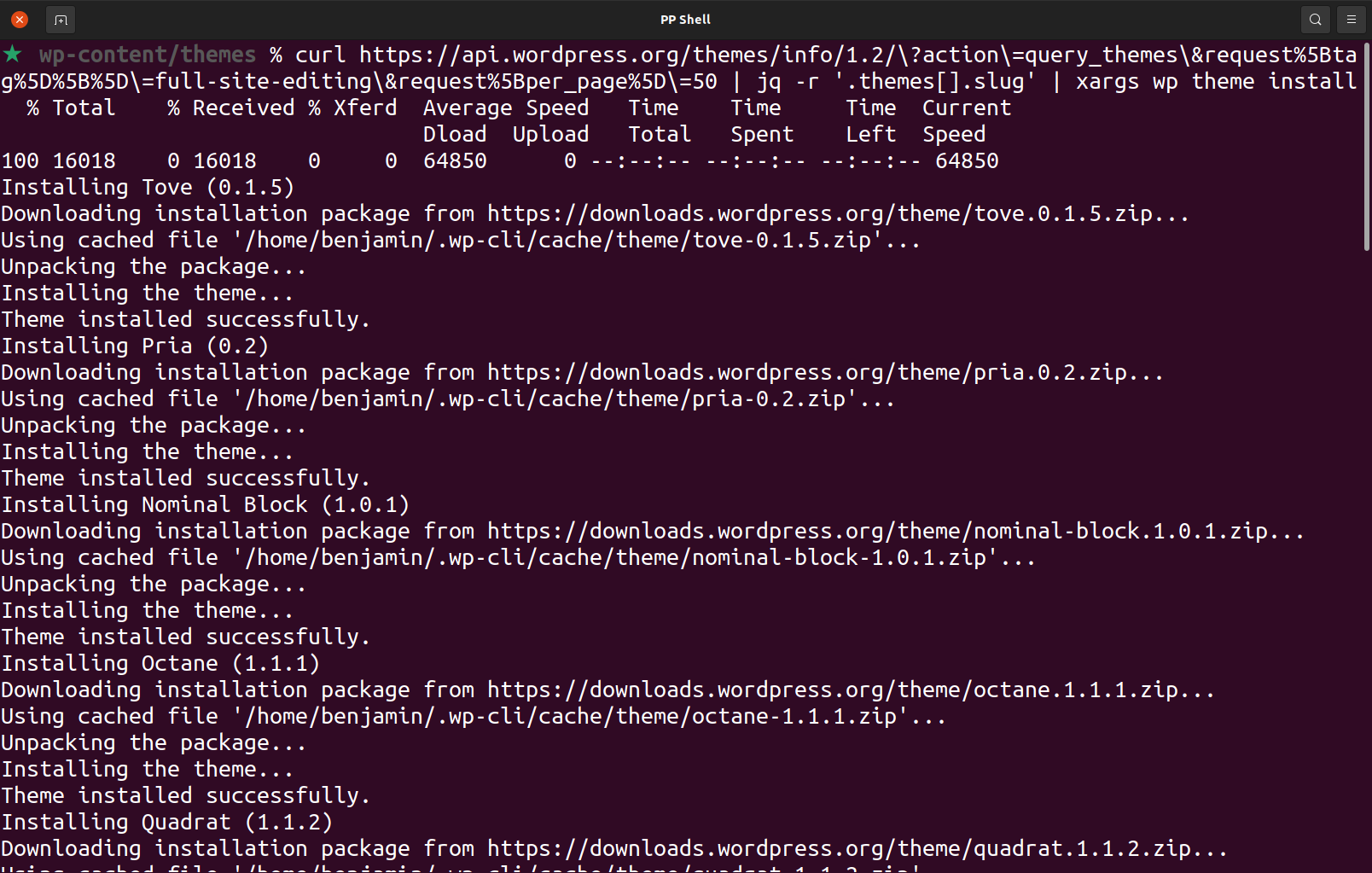

Installing Block-based themes with WP-CLI

I wanted a quick and easy way to install all block-based WordPress themes so that I could start examining the code used to build them!